Why GiveWell should use complete uncertainty quantification

GiveWell is a charity evaluator which distributes hundreds of millions of dollars annually to charities it estimates to be highly cost effective. It regularly publishes a quantitative cost effectiveness model, which is an influential input into its decision-making. In 2022, GiveWell launched the Change Our Minds contest, intended to incentivize interested researchers to publish critiques of the cost effectiveness model. This blogpost is inspired by an entry I co-authored with Sam Nolan and Hannah Rokebrand, which received an Honourable Mention ($5K cash prize).

In the entry, we quantify the uncertainty in each parameter of GiveWell’s model. One potential use of such quantification (which we don’t do in the entry) is to perform explicit Bayesian adjustments of the final cost effectiveness estimates, in order to correct for the Optimizer’s Curse.[1] (In short, we should ‘penalize’ more uncertain estimates in favour of less uncertain estimates, even if we are risk neutral.) This blogpost shares the results of such a Bayesian adjustment, and makes arguments in favour of GiveWell adopting what I call “complete uncertainty quantification” in its cost effectiveness models.

Edit: I no longer endorse all arguments made in this post, but I appreciate that GiveWell researchers have engaged with it. I am leaving it up, unedited, as a sort of steelman of a thesis I now don’t quite agree with.

0. What is this?

This blogpost is an informally-written compilation of arguments in favour of complete uncertainty quantification. I draw mostly upon others’ recent criticisms of GiveWell, as well as some original work and arguments. The major weakness in this post is that I do not defend complete uncertainty quantification against some of the obvious objections (e.g., that choosing the right probability distributions is arbitrary, or that it may have communication downsides). Instead, my focus is on why the alternatives of a) not quantifying uncertainty, and b) only partially quantifying uncertainty will lead to a suboptimal allocation of philanthropic funding.

I have also written a more academic essay on post-decision surprise, which can be read here (its conclusions are discussed in this post, so you can safely skip the essay unless you are interested in the technical background).
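For readers who want some intuition before (or instead of) the essay, here is a toy simulation of post-decision surprise. It is a minimal sketch under assumptions of my own choosing: true values are standard normal, each estimate adds independent normal noise, and we always pick the option with the highest estimate. The chosen option's true value then falls short of its estimate on average, and the shortfall grows with the noise. This is the Optimizer’s Curse that the Bayesian adjustment is meant to correct.

```python
import random
import statistics


def post_decision_surprise(n_options=10, noise_sd=1.0, trials=10_000, seed=2):
    """Mean of (true value - estimated value) for whichever option has the highest estimate."""
    rng = random.Random(seed)
    surprises = []
    for _ in range(trials):
        # Toy assumption: true values are standard normal...
        true_values = [rng.gauss(0.0, 1.0) for _ in range(n_options)]
        # ...and each estimate is the true value plus independent normal noise.
        estimates = [v + rng.gauss(0.0, noise_sd) for v in true_values]
        chosen = max(range(n_options), key=lambda i: estimates[i])
        surprises.append(true_values[chosen] - estimates[chosen])
    return statistics.fmean(surprises)


results = {sd: post_decision_surprise(noise_sd=sd) for sd in (0.0, 0.5, 1.0, 2.0)}
for sd, surprise in results.items():
    print(f"noise sd {sd}: mean surprise {surprise:+.3f}")
```

With noise-free estimates the surprise is exactly zero, so the disappointment comes from estimation error rather than from the true values themselves.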

1. Definitions

Complete uncertainty quantification: Expressing every parameter in the cost effectiveness model as a probability distribution, then running repeated simulations to get a distribution of potential outcomes.[2]

Partial uncertainty quantification: Expressing only some parameters in the cost effectiveness model as probability distributions (holding the rest at point estimates), then running repeated simulations to get a distribution of potential outcomes.
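To make the two definitions concrete, here is a minimal Monte Carlo sketch. The three-parameter model, its parameter names, and every distribution are hypothetical assumptions for illustration, not anything taken from GiveWell’s spreadsheets. Complete quantification draws every parameter on each run; partial quantification draws only one "key" parameter and holds the others at point estimates.

```python
import random
import statistics

# Hypothetical model: value per dollar = effect * value_per_outcome / cost_per_person.
# Parameter names, point estimates, and distributions are illustrative assumptions.
POINT_ESTIMATES = {"effect": 0.75, "value_per_outcome": 1.0, "cost_per_person": 50.0}
SAMPLERS = {
    "effect": lambda rng: rng.lognormvariate(-0.3, 0.3),
    "value_per_outcome": lambda rng: rng.lognormvariate(0.0, 0.5),
    "cost_per_person": lambda rng: rng.uniform(40.0, 60.0),
}


def model(p):
    return p["effect"] * p["value_per_outcome"] / p["cost_per_person"]


def simulate(uncertain, n=20_000, seed=0):
    """Draw the parameters listed in `uncertain`; hold the others at point estimates."""
    rng = random.Random(seed)
    results = []
    for _ in range(n):
        params = dict(POINT_ESTIMATES)
        for name in uncertain:
            params[name] = SAMPLERS[name](rng)
        results.append(model(params))
    return results


complete = simulate(uncertain=list(SAMPLERS))  # every parameter is a distribution
partial = simulate(uncertain=["effect"])       # only one "key" parameter is
for label, draws in (("complete", complete), ("partial", partial)):
    print(f"{label}: mean {statistics.fmean(draws):.4f}, sd {statistics.stdev(draws):.4f}")
```

Shrinking the `uncertain` list is the move from complete to partial quantification; the parameters left out contribute no spread at all to the output distribution.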

For readers looking for a more comprehensive explanation of uncertainty quantification in general, Sam Nolan has written a step-by-step walkthrough of his uncertainty quantification of GiveWell’s analysis of GiveDirectly. Uncertainty quantification is also used by health economists. Chapters 4 and 5 of the Briggs book are an excellent explanation of conventional practice.

2. Why uncertainty quantification?

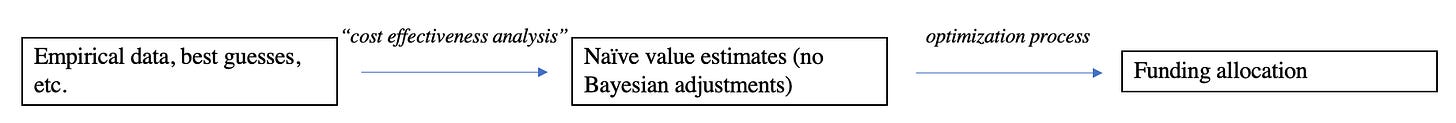

This is my rough mental model of GiveWell’s process.

Quantifying uncertainty is costly. We need some justification to do it. There are two justifications that come to mind:

Uncertainty quantification will change the mean of our naïve value estimates.[3]

Uncertainty quantification will allow for improvements to the optimization process.

The first reason is true: the (rough) complete uncertainty quantification I co-authored with Sam Nolan and Hannah Rokebrand shows very slight changes to the mean cost effectiveness estimates. However, because the changes are so slight, they may not be sufficient justification for quantifying uncertainty.
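Why the mean shifts at all can be checked numerically. In this hypothetical sketch, the only uncertain input is cost per person (assumed uniform between $20 and $80), and value per dollar is value per person divided by cost. Plugging the mean cost into the formula gives a different answer from averaging the formula over the cost draws, because 1/x is convex (Jensen’s inequality); for this particular distribution the exact expectation is ln(4)/60.

```python
import math
import random
import statistics

rng = random.Random(1)
# Hypothetical: the only uncertain input is cost per person, uniform on $20-$80.
costs = [rng.uniform(20.0, 80.0) for _ in range(100_000)]
value_per_person = 1.0  # held fixed for simplicity

plug_in = value_per_person / statistics.fmean(costs)                 # transform of the expectation
monte_carlo = statistics.fmean(value_per_person / c for c in costs)  # expectation of the transform
exact = value_per_person * math.log(80.0 / 20.0) / 60.0              # E[1/C] for C ~ U(20, 80)
print(f"plug-in {plug_in:.4f}, Monte Carlo {monte_carlo:.4f}, exact {exact:.4f}")
```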

I’ll argue here that the second reason is more compelling. My (limited, potentially wrong) understanding of GiveWell’s optimization process, as inferred from public information, is that they order charities from most to least cost effective, then go down the list, funding each opportunity until its funding gap is closed. There are also some informal attempts at correcting for post-decision surprise (we’ll get to this concept soon), such as downwards internal validity adjustments, and not funding highly speculative charities with high naïvely-estimated cost effectiveness.
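The ranking-then-filling process described above can be sketched in a few lines. This is only my reading of the public information, with hypothetical charity names and figures, and it leaves out the informal adjustments just mentioned. Note that only point estimates enter the decision, so two charities with the same estimate are treated identically however uncertain each one is.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    value_per_dollar: float  # naive point estimate of cost effectiveness
    funding_gap: float       # dollars


def greedy_allocate(opps, budget):
    """Fund opportunities from most to least cost effective until the budget runs out."""
    grants = {}
    for opp in sorted(opps, key=lambda o: o.value_per_dollar, reverse=True):
        if budget <= 0:
            break
        grant = min(opp.funding_gap, budget)
        grants[opp.name] = grant
        budget -= grant
    return grants


# Hypothetical charities and figures, for illustration only.
opps = [
    Opportunity("X", 0.030, 40e6),
    Opportunity("Y", 0.045, 25e6),
    Opportunity("Z", 0.020, 60e6),
]
print(greedy_allocate(opps, budget=100e6))
# {'Y': 25000000.0, 'X': 40000000.0, 'Z': 35000000.0}
```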

Here are three reasons why uncertainty quantification will improve GiveWell’s optimization process.

a. Bayesian adjustments for post-decision surprise may imply changes in the ranking of New Incentives among GiveWell’s Top Charities.

Below is a diagram from the essay I mention in the introduction. It was generated by the following process (also see section 3 of my essay for a three-page explanation).

Take the cost effectiveness estimates with complete uncertainty quantification created by Sam Nolan, Hannah Rokebrand, and me, then normalize them.[4]

Apply a Bayesian adjustment to the estimates, with a normally distributed prior with mean 0.01 doubling-consumption-year-equivalents per dollar (DCYE/$, i.e., GiveWell’s ‘units of value’ per dollar) and standard deviation 0.005 DCYE/$.

Yell in frustration at ggplot until it gives you what you want.

This is quite a rough approach. We should be fairly confident that a suitable prior on charity cost effectiveness is not normally distributed, and the naïve estimates themselves are probably not suitably modelled as normally distributed (see essay). But these assumptions make the math very convenient.
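The convenience is presumably the standard normal-normal update: with prior mean μ₀ and sd σ₀, and a naïve estimate x with sd σ, the posterior mean is the precision-weighted average (μ₀/σ₀² + x/σ²) / (1/σ₀² + 1/σ²). Below is a minimal sketch of that update using the prior from the post (mean 0.01, sd 0.005 DCYE/$) and three hypothetical charities, P, Q and R, whose numbers are invented and are not the estimates behind the diagram. The noisiest estimate is pulled hardest towards the prior, which is enough to reverse the ranking.

```python
PRIOR_MEAN = 0.01  # DCYE per dollar, the prior mean used in the post
PRIOR_SD = 0.005   # DCYE per dollar, the prior sd used in the post


def posterior_mean(estimate, sd, prior_mean=PRIOR_MEAN, prior_sd=PRIOR_SD):
    """Normal-normal conjugate update: precision-weighted average of prior mean and estimate."""
    w_prior = 1.0 / prior_sd**2
    w_est = 1.0 / sd**2
    return (w_prior * prior_mean + w_est * estimate) / (w_prior + w_est)


# Hypothetical naive (mean, sd) pairs in DCYE/$, not the post's actual figures.
naive = {"P": (0.040, 0.030), "Q": (0.035, 0.010), "R": (0.030, 0.008)}
adjusted = {k: posterior_mean(m, s) for k, (m, s) in naive.items()}

naive_ranking = sorted(naive, key=lambda k: naive[k][0], reverse=True)
adjusted_ranking = sorted(adjusted, key=adjusted.get, reverse=True)
print(naive_ranking)     # ['P', 'Q', 'R']
print(adjusted_ranking)  # ['R', 'Q', 'P']
```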

My conclusion is that having a low prior (as GiveWell seems to have) on the cost effectiveness of GiveWell’s Top Charities could imply that less funding should be directed to New Incentives. GiveWell Special Projects Officer Isabel Arjmand writes that:

…if we find that this [uncertainty] problem leads to a sufficiently large impact on the value of our allocations, it might be worth the costs of a more complicated modeling approach. We’d like to do more work to explore how big of an impact this problem has on our portfolio.

In my opinion, this sketch of explicit Bayesian adjustments changing the ordering of New Incentives renders it likely that the impact of considering uncertainty is “sufficiently large”.

b. Uncertainty quantification allows for the alternative decision rules introduced by Noah Haber in ‘GiveWell’s Uncertainty Problem’.

Haber introduces a number of potentially-superior decision rules to GiveWell’s current optimization process. These are: informally looking at the uncertainty, using a lower bound of the uncertainty interval, using a probability-based threshold with a discrete comparator (e.g., the mean of the cost effectiveness of GiveDirectly), and using a probability-based threshold with a distributional comparator (e.g., the distribution of the cost-effectiveness of GiveDirectly).

Like the Bayesian framework I use above, this too requires at least partial uncertainty quantification.
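Apart from informally looking at the uncertainty, each of these rules can be computed directly from Monte Carlo output. A minimal sketch follows. The two lognormal distributions are invented for illustration, the 90% interval (and so the 5th percentile as its lower bound) is my choice, and the charity and GiveDirectly draws are independent here; if both models shared parameters, paired draws from the same simulation run would preserve that correlation. A funder would then compare each number against a threshold of its choosing.

```python
import random
import statistics

rng = random.Random(3)
N = 50_000
# Hypothetical Monte Carlo draws of cost effectiveness (DCYE/$); the distributions are assumptions.
charity = [rng.lognormvariate(-3.5, 0.8) for _ in range(N)]
give_directly = [rng.lognormvariate(-5.0, 0.3) for _ in range(N)]

# Rule: lower bound of a 90% uncertainty interval (the 5th percentile).
lower_bound = statistics.quantiles(charity, n=20)[0]

# Rule: probability of beating a discrete comparator (GiveDirectly's mean).
gd_mean = statistics.fmean(give_directly)
p_vs_mean = sum(c > gd_mean for c in charity) / N

# Rule: probability of beating a distributional comparator (draw-by-draw comparison).
p_vs_dist = sum(c > g for c, g in zip(charity, give_directly)) / N

print(f"5th percentile: {lower_bound:.4f}")
print(f"P(charity > GiveDirectly mean): {p_vs_mean:.3f}")
print(f"P(charity > GiveDirectly draw): {p_vs_dist:.3f}")
```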

c. Uncertainty quantification allows us to calculate the value of information on a given parameter (if its uncertainty is quantified), thereby taking a systematic approach to generating a research agenda.

The GiveWell team’s intuitions about where its research hours are best spent are probably roughly reliable, but may not always be fully optimized given the information we already have. But calculating the value of information on parameters (see Nolan, Rokebrand, and Rao 2022) can help GiveWell make decisions about whether to fund an additional RCT, where to focus its desk research time, etc.
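As a rough illustration of the idea, the sketch below computes the expected value of perfect information (EVPI) and, for each parameter, the expected value of partial perfect information (EVPPI) by nested Monte Carlo. This is a generic textbook approach, not necessarily the one used in Nolan, Rokebrand, and Rao 2022, and the two-option decision, parameter names, and ranges are all hypothetical. A parameter with high EVPPI is a natural candidate for an RCT or more desk research; one with EVPPI near zero is not, even though it is uncertain.

```python
import random
import statistics

rng = random.Random(4)

# Hypothetical decision: fund option A (value per dollar = effect * adherence)
# or a benchmark B with a known value per dollar. All numbers are assumptions.
B_VALUE = 0.019
SAMPLERS = {
    "effect": lambda: rng.uniform(0.025, 0.035),  # fairly well studied
    "adherence": lambda: rng.uniform(0.2, 1.0),   # poorly understood
}


def value_a(params):
    return params["effect"] * params["adherence"]


def evpi(n=100_000):
    """Expected value of perfect information about every parameter."""
    draws = [value_a({k: draw() for k, draw in SAMPLERS.items()}) for _ in range(n)]
    value_now = max(statistics.fmean(draws), B_VALUE)                  # decide on current expectations
    value_informed = statistics.fmean(max(d, B_VALUE) for d in draws)  # decide after learning everything
    return value_informed - value_now


def evppi(focal, outer=400, inner=400):
    """Expected value of perfect information about one parameter, via nested Monte Carlo."""
    others = [k for k in SAMPLERS if k != focal]
    conditional_means = []
    for _ in range(outer):
        known = SAMPLERS[focal]()  # outer loop: suppose we learn the focal parameter
        inner_draws = []
        for _ in range(inner):     # inner loop: the remaining parameters stay uncertain
            params = {focal: known, **{k: SAMPLERS[k]() for k in others}}
            inner_draws.append(value_a(params))
        conditional_means.append(statistics.fmean(inner_draws))
    value_now = max(statistics.fmean(conditional_means), B_VALUE)
    value_informed = statistics.fmean(max(m, B_VALUE) for m in conditional_means)
    return value_informed - value_now


full = evpi()
partial_info = {name: evppi(name) for name in SAMPLERS}
for name, v in partial_info.items():
    print(f"EVPPI({name}) = {v:.5f}")
print(f"EVPI = {full:.5f}")
```

Nested Monte Carlo is slow and slightly biased upwards for small inner samples; it is used here only because it is the most transparent way to show what EVPPI means.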

3. Why not partial uncertainty quantification?

Partial uncertainty quantification (probabilistic sensitivity analysis) has been proposed by Noah Haber, noted as promising by Isabel Arjmand, and is often used in other cost effectiveness analyses (e.g., by health economists).

My current view is that it is tempting, but wrong, to view partial uncertainty quantification as a reasonable compromise between only using point estimates of parameters, and complete uncertainty quantification.

a. Post-adjustment rankings are affected by the absolute variance in the naïve estimates, not just the relative variances.

Here is a version of the diagram shown in part 2, except where the standard deviation in each estimate is multiplied by the same constant (1/8 in the upper left panel, 1/4 in the upper right panel, 1/2 in the lower left panel, etc.).[5] As more and more uncertainty is modelled, the rankings of charities change. Initially, at 12.5% of 'true' standard deviation, the ranking post-adjustment is the same as the ranking of naïve value estimates. At 25%, New Incentives falls from second-ranked to third-ranked. At 50% and 100%, New Incentives is ranked fourth among GiveWell's current Top Charities.
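The same effect can be reproduced in miniature. This sketch reuses the normal-normal update with the post’s prior, applied to three hypothetical charities (invented numbers, not the ones in the diagram), and multiplies every sd by a common scale before adjusting. Because the prior’s sd stays fixed while the estimates’ sds shrink, the balance between prior and estimate depends on the absolute size of the modelled sd, not just on the charities’ relative uncertainty.

```python
PRIOR_MEAN, PRIOR_SD = 0.01, 0.005  # DCYE/$, the prior used in the post


def posterior_mean(estimate, sd):
    """Normal-normal update: precision-weighted average of the prior mean and the estimate."""
    w_prior, w_est = 1.0 / PRIOR_SD**2, 1.0 / sd**2
    return (w_prior * PRIOR_MEAN + w_est * estimate) / (w_prior + w_est)


# Hypothetical naive (mean, 'true' sd) pairs in DCYE/$, not GiveWell's figures.
NAIVE = {"P": (0.040, 0.030), "Q": (0.035, 0.010), "R": (0.030, 0.008)}


def ranking(scale):
    """Post-adjustment ranking when only `scale` times the true sd is modelled."""
    adjusted = {k: posterior_mean(m, s * scale) for k, (m, s) in NAIVE.items()}
    return sorted(adjusted, key=adjusted.get, reverse=True)


for scale in (1 / 8, 1 / 4, 1 / 2, 1):
    print(f"{scale:.3f} of sd modelled: {ranking(scale)}")
```

In this toy case the ranking differs between the naïve estimates (P first), the partially modelled cases, and the fully modelled case (R first), so where to stop modelling uncertainty is not a neutral choice.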

If GiveWell ends up using partial uncertainty quantification, special care must be taken not to systematically underestimate the uncertainty in the value estimates. Alternatively, further research could examine what proportion of the ‘true’ s.d. (i.e., the s.d. that a complete uncertainty quantification would produce) needs to be modelled before this issue can be safely ignored. For my sketch above, this seems to be <50%, but this may vary for a number of reasons.

However, I currently think that the relevance of absolute, rather than merely relative, standard deviations in the naïve estimates is good reason to quantify all of the uncertainty, rather than just a proportion of it.

b. Partial uncertainty quantification risks introducing systematic biases, depending on which key parameters are chosen for quantification.

The previous argument assumes that the standard deviation in each estimate is scaled down by the same factor. However, only quantifying uncertainty in certain types of parameters could also mean that some kinds of interventions are unfairly punished in the post-adjustment rankings.

I will illustrate a potential issue that could arise through a stylized example.

Analysis of Charity A: This analysis relies on some very promising trials for the efficacy of the intervention Charity A uses. The trials have narrow confidence intervals, but took place in different contexts (e.g., a different city) with slightly different methods (e.g., researchers checked up on patients more often in the trial) than those used by Charity A. As such, the cost effectiveness analysis includes numerous parameters roughly adjusting the trials’ results for potential internal and external validity concerns.

Analysis of Charity B: This analysis relies on some very promising trials for the efficacy of the intervention Charity B uses. The trials have wider confidence intervals, but took place in a very similar context, using methods identical to Charity B’s.

In this example, if the “key” parameters selected by the charity evaluator were exclusively those with an easily defensible, objective means by which to quantify uncertainty (i.e., the confidence intervals from the trials), then Charity B might unjustifiably have a higher estimated uncertainty than Charity A. This is because the charity evaluator’s decision to focus on only some categories of parameters is punitive towards charities with higher uncertainty in those categories, and forgiving towards charities whose parameters may be highly uncertain in other categories (e.g., due to external validity or something else that escapes scientific study).
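The stylized example can be put into numbers. In this hypothetical sketch (all figures invented), each charity’s value per dollar is a trial effect multiplied by a validity adjustment. "Partial" quantification samples only the trial effects and holds validity at a point estimate; "complete" samples both. Under partial quantification Charity B looks far more uncertain than Charity A; under complete quantification the order flips.

```python
import random
import statistics

rng = random.Random(5)
N = 50_000


def charity_a(quantify_validity):
    # Hypothetical: tight trial result, but a very uncertain validity adjustment.
    effect = rng.gauss(0.030, 0.002)
    validity = rng.uniform(0.2, 1.0) if quantify_validity else 0.6
    return effect * validity


def charity_b(quantify_validity):
    # Hypothetical: wider trial result, but a near-identical trial context.
    effect = rng.gauss(0.020, 0.006)
    validity = rng.uniform(0.95, 1.0) if quantify_validity else 0.975
    return effect * validity


def simulated_sd(charity, quantify_validity):
    return statistics.stdev([charity(quantify_validity) for _ in range(N)])


sds = {
    complete: (simulated_sd(charity_a, complete), simulated_sd(charity_b, complete))
    for complete in (False, True)
}
for complete, (sd_a, sd_b) in sds.items():
    label = "complete" if complete else "partial (trial CIs only)"
    print(f"{label}: sd A = {sd_a:.4f}, sd B = {sd_b:.4f}")
```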

4. Conclusion

GiveWell’s analysis has an uncertainty problem. Sam Nolan, Hannah Rokebrand, and I have argued this. Noah Haber has argued this. Christian Smith has argued this. GiveWell has acknowledged this problem, and is currently exploring ways to best address it. In this post, I’ve explained why I think explicit uncertainty quantification is necessary, and why it should probably be done for every parameter in GiveWell’s model.

[1] Smith, J. E., and Winkler, R. L. (2006). The Optimizer’s Curse: Skepticism and Postdecision Surprise in Decision Analysis. Management Science, 52(3), 311-322. https://jimsmith.host.dartmouth.edu/wp-content/uploads/2022/04/The_Optimizers_Curse.pdf

[2] I partially borrow this phrasing from Isabel Arjmand.

[3] The expectation of the transformation does not equal the transformation of the expectation, unfortunately!

[4] These estimates use simple averaging for each Top Charity across all the regions in which it works. This is very unlikely to be what GiveWell actually uses to make funding decisions. If the estimates were broken down into charity-country pairings, for example, we would probably find uncertainty quantification to be even more consequential, because more rankings would be changed.

[5] Edit: The title in the lower left panel should read ‘50% of Uncertainty Modelled’.